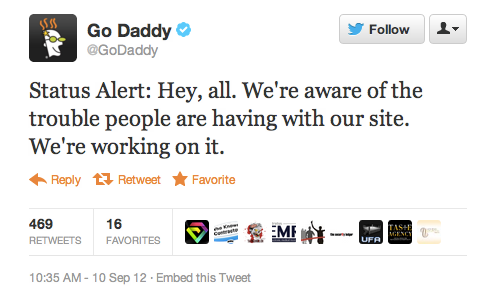

The GoDaddy DNS Outage and Paternity Test: Who’s your GoDaddy?

It was another episode of the Maury Povich Paternity Test on DNS Outage TV yesterday. Having just written about a major AT&T DNS outage on Aug. 15, here we are again on Sept. 10, 2012, witnessing the GoDaddy DNS outage. For millions of website and email users, the DNS look-up process is playing out like a Maury Povich episode of paternity testing gone wrong. First-time visitors to a GoDaddy website type the URL into their browser and the answer from the DNS comes back “This ain’t your GoDaddy.” Or something like that.

Dealing with DNS outage Denial

Last month the DNS outage was at AT&T. So, what’s a website owner to do now that another “big daddy” DNS provider is exposed (again) as not completely reliable? One option is to switch to another DNS provider and gamble that “this” DNS provider is somehow immune to the vagaries of the Internet. Another option is to stop deluding yourself, grow up and do something realistic about the fact that DNS providers – like everything else on the Internet – are not perfect and never will be. Ladies and gentlemen, our completely not bold prediction is: a major DNS outage will happen again soon.

Get Real with your DNS: Don’t use a Tainted DNS Test Kit

I would argue that a better option is to put in place website monitoring that uses a “non-cache based” methodology, which will detect DNS issues (you can test using a non-cache DNS look-up here – free using Trace Style “DNS”). NOTE: A cache-based monitoring service will not accurately detect DNS issues – only a non-cache based method will. At the end of each paternity test, Maury Povich says “You are the father,” or “You are NOT the father.” Basically, if you’re using a cache method for monitoring, the statement would be more like “You may or may not be a father – we can’t tell.” Not good TV, nor good DNS monitoring.

Acceptance 1: Planning for the DNS Outages of the Future

As I wrote in “Doing DNS Monitoring Right: The AT&T DNS Outage,” it is not generally well known that the basic synthetic HTTP monitoring methodology for website monitoring comes in two “flavors”: a “cache” or a “non-cache” methodology. The choice of methodology by a monitoring service directly impacts its ability to detect issues on secondary DNS servers, such as the GoDaddy DNS outage and AT&T DNS outage. A cache-based method is far simpler for the monitoring business to implement and costs less to set up and administer. In fact, most of the low-cost, “basic” uptime monitoring services use a “cache method.”
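To make the cache versus non-cache distinction concrete, here is a minimal Python sketch of one ingredient of a non-cache check: a DNS query built by hand with the Recursion Desired flag cleared and sent straight to a name server you choose, so no resolver cache sits in between. This is an illustration only, not the implementation of any particular monitoring service. The function names (`build_query`, `parse_header`, `query_server`) and the default query ID are made up for the example, and a full non-cache monitor would also follow referrals down from the root name servers.

```python
import socket
import struct


def build_query(hostname: str, query_id: int = 0x1234, qtype: int = 1) -> bytes:
    """Build a DNS query packet with the Recursion Desired (RD) bit cleared.

    qtype 1 is an A record. query_id is an arbitrary value chosen for this example.
    """
    flags = 0x0000  # standard query, RD=0: ask the server itself, not its cache chain
    header = struct.pack("!HHHHHH", query_id, flags, 1, 0, 0, 0)
    # Encode the name as length-prefixed labels terminated by a zero byte.
    qname = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in hostname.rstrip(".").split(".")
    ) + b"\x00"
    question = qname + struct.pack("!HH", qtype, 1)  # QCLASS 1 = IN (Internet)
    return header + question


def parse_header(response: bytes) -> dict:
    """Decode the 12-byte DNS header of a response."""
    query_id, flags, _qd, an, ns, ar = struct.unpack("!HHHHHH", response[:12])
    return {
        "id": query_id,
        "authoritative": bool(flags & 0x0400),  # AA bit
        "rcode": flags & 0x000F,                # 0 = no error
        "answers": an,
        "authority": ns,
        "additional": ar,
    }


def query_server(hostname: str, server_ip: str, timeout: float = 3.0) -> dict:
    """Send the query over UDP to one specific name server (hypothetical helper)."""
    packet = build_query(hostname)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        sock.sendto(packet, (server_ip, 53))
        response, _ = sock.recvfrom(4096)
    return parse_header(response)
```

Calling `query_server` with a domain and the IP address of one of its name servers returns the response header fields. A timeout exception or a non-zero `rcode` from that server is the kind of failure a cached look-up can hide.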

Acceptance 2: Not GoDaddy DNS, not Nobody DNS is Perfect

Specifically, the reason non-cache is more cost-effective is that when an issue like the GoDaddy and AT&T DNS outages inevitably occurs – as when any website error condition occurs – it is the total Time-to-Repair (TTR) that determines the loss due to website downtime. In other words, the longer the total time (1) it takes to detect, diagnose, and repair an error, the worse the impact of the error. Conversely, the more a monitoring solution shortens TTR, the more the loss is reduced (or completely avoided).
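A rough way to picture this is to add up the three phases of TTR and multiply by what a minute of downtime costs. The sketch below does that in Python. Every number in it is hypothetical, the `Incident` class and `downtime_loss` function are names invented for the example, and the assumption that loss grows linearly with downtime is a simplification.

```python
from dataclasses import dataclass


@dataclass
class Incident:
    """Time spent in each phase of an outage, in minutes (illustrative values only)."""
    detect_minutes: float
    diagnose_minutes: float
    repair_minutes: float

    @property
    def ttr_minutes(self) -> float:
        # Total Time-to-Repair is the sum of detection, diagnosis, and repair time.
        return self.detect_minutes + self.diagnose_minutes + self.repair_minutes


def downtime_loss(incident: Incident, loss_per_minute: float) -> float:
    """Estimate loss, assuming it scales linearly with TTR (a simplifying assumption)."""
    return incident.ttr_minutes * loss_per_minute


if __name__ == "__main__":
    # Hypothetical scenarios: hourly checks without diagnostics vs. 1-minute checks with them.
    slow = Incident(detect_minutes=60, diagnose_minutes=120, repair_minutes=30)
    fast = Incident(detect_minutes=1, diagnose_minutes=30, repair_minutes=30)
    for name, incident in (("slow", slow), ("fast", fast)):
        print(name, incident.ttr_minutes, downtime_loss(incident, loss_per_minute=100))
```

In this made-up comparison the repair phase is identical in both cases, so the whole difference comes from how quickly the problem is detected and diagnosed.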

Okay, I’m owning my DNS – Now What?

Take Action to address your DNS outage Time-to-Repair BEFORE a DNS outage happens again:

Look, we all make mistakes. Life, and DNS propagation, just happens. Let’s make a few small changes and get on top of this, so the next time it happens it isn’t a big Twitter-feed apology fest and freak-out for your website users, okay?

– Error detection method: Test a website and DNS monitoring solution that uses a non-cache method, sending DNS queries all the way through to the root name servers with each monitoring instance. A cache-method service caches DNS results and therefore may not detect a secondary DNS issue at all, or may take days or weeks to detect it.

– Frequency of monitoring: Use a faster frequency of non-cache monitoring, such as every minute versus once per hour. The faster the non-cache monitoring solution detects a failing DNS service and alerts the administrator of an affected website, the faster a switch can be made to a DNS failover provider.

– Time-to-Live (TTL) setting: The TTL is the value DNS administrators set on the primary authoritative name server to control how long secondary DNS servers cache a domain name’s records. The smaller the TTL, the sooner a change to those records takes effect. Typically set to 86,400 seconds (1 day) or more, in disaster recovery planning the TTL can be set as low as 300 seconds; however, the lower the setting, the higher the load on the authoritative name server.

– Diagnostics: Make sure your website monitoring service provides diagnostics when an error occurs, such as an automatic trace-route at the time of the detected DNS problem. Without diagnostics, how will you know what the problem is? NOTE: Many of the more basic monitoring services do not provide any diagnostic info.

– Repair: Keep the monitoring solution running during the error condition to further pinpoint the issue. Send the monitoring results to your DNS provider. You can also run free manual non-cache DNS trace-routes here (select Trace Style “DNS”) to verify the issue as needed.

– Prevent: Use a monitoring solution that lets you view the details of a DNS look-up (such as actual-browser monitoring) in order to see “soft errors” such as slow-down trends and intermittent issues, so you can take action before the “soft error” becomes a “hard error” such as customer-facing downtime.
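The monitoring-frequency and TTL items in the list above interact, and a little arithmetic shows how. The Python sketch below uses a deliberately simplified model that I am assuming for illustration: a failure that starts just after a check goes unnoticed for up to one full check interval, and a corrected record can stay stale in caches for up to one full TTL. It ignores diagnosis time and the time a person needs to make the switch. The function names are invented for the example.

```python
SECONDS_PER_DAY = 86_400


def worst_case_detection_delay(check_interval_s: int) -> int:
    """A failure that begins right after a check is not seen until the next check."""
    return check_interval_s


def refreshes_per_day(ttl_s: int) -> float:
    """Upper bound on how often one caching server re-queries the authoritative server."""
    return SECONDS_PER_DAY / ttl_s


def worst_case_exposure(check_interval_s: int, ttl_s: int) -> int:
    """Simplified worst case: detection delay plus time for cached records to expire."""
    return worst_case_detection_delay(check_interval_s) + ttl_s


if __name__ == "__main__":
    # Compare hourly checks with a 1-day TTL against 1-minute checks with a 300-second TTL.
    for interval, ttl in ((3600, 86_400), (60, 300)):
        print(
            f"interval={interval}s ttl={ttl}s "
            f"exposure={worst_case_exposure(interval, ttl)}s "
            f"refreshes/day per cache={refreshes_per_day(ttl):.0f}"
        )
```

Under this model the 1-minute, 300-second combination caps exposure at 360 seconds instead of 90,000, at the price of up to 288 refreshes per day from each caching server rather than one. That is the load trade-off on the authoritative name server mentioned in the TTL item.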

Next Steps: “And in a few months we’ll follow up and see how they are”

Free Instant non-cache DNS Test here

Free Trial non-cache DNS Monitoring here

Full DNS Monitoring Account Set-up here

(1) In a September 2011 TRAC Research study, participating organizations identified the amount of time spent troubleshooting performance issues as their top challenge, with “on average, over a full work-week of man-hours (46.2 hours) spent in war room situations each month.”