API Monitoring: Definition, Metrics, Types & Setup Guide

API monitoring is the continuous, automated practice of validating API endpoints for availability, response time, and data correctness — confirming not only that an endpoint responds, but that it returns the right data, in the right format, within acceptable latency, from the perspective of users and dependent systems.
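As a rough illustration of the three checks named above (availability, response time, and data correctness), here is a minimal Python sketch. It evaluates a response that has already been fetched, so it makes no network calls. The names `CheckResult` and `evaluate_response`, the 500 ms latency budget, and the "required fields with expected types" notion of correctness are all assumptions made for this example, not part of any particular monitoring product.

```python
from dataclasses import dataclass
from typing import Any, Mapping


@dataclass
class CheckResult:
    """Outcome of one synthetic API check (hypothetical structure)."""
    available: bool
    fast_enough: bool
    data_correct: bool

    @property
    def healthy(self) -> bool:
        # A check passes only if all three criteria hold.
        return self.available and self.fast_enough and self.data_correct


def evaluate_response(
    status_code: int,
    elapsed_ms: float,
    body: Mapping[str, Any],
    required_fields: Mapping[str, type],
    max_latency_ms: float = 500.0,  # assumed latency budget
) -> CheckResult:
    """Judge an already-fetched response against the three criteria."""
    # Availability: the endpoint answered with a 2xx status.
    available = 200 <= status_code < 300
    # Response time: the call finished within the latency budget.
    fast_enough = elapsed_ms <= max_latency_ms
    # Data correctness: every required field is present with the expected type.
    data_correct = all(
        name in body and isinstance(body[name], expected)
        for name, expected in required_fields.items()
    )
    return CheckResult(available, fast_enough, data_correct)
```

An endpoint that returns HTTP 200 quickly but sends `"id"` as a string where an integer is expected would come back as available and fast, yet not healthy. That is the gap a plain uptime ping would miss.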

Top 10 Datadog Competitors & Alternatives in 2026

In this article, we’ll explore the top 10 Datadog competitors and alternatives in 2026, analyzing their key features, pros, and cons to help you find the best fit for your monitoring needs.

What Is Synthetic Monitoring? Types, Metrics, & Best Practices

Synthetic monitoring is a proactive performance testing method that uses scripted, automated transactions to simulate real user interactions with your applications — measuring availability, response time, and functionality before issues

Does website speed affect SEO in 2026?

Website performance is critical to a good customer experience, and the better the customer experience, the better you rank.

11 Web Application Monitoring Best Practices (2026)

Master 11 web application monitoring best practices to reduce MTTR and boost reliability – from synthetic transactions to global monitoring with Dotcom-Monitor.

Best Certificate Monitoring Solutions With Slack/Teams Integration – The Complete Guide

Explore the best SSL certificate monitoring solutions with Slack and Teams integration. Get real-time alerts, track expiry dates, and prevent certificate-related outages.

How much does downtime cost per hour in 2026?

A recent IDC report, “DevOps and the Cost of Downtime: Fortune 1000 Best Practice Metrics Quantified,” explored the cost of downtime across Fortune 1000 organizations.

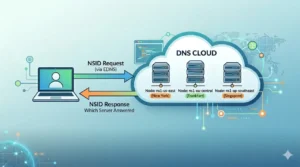

What Is DNS NSID? How to See Which DNS Server Answered

NSID (Name Server Identifier) is a DNS extension that allows a DNS server to include an identifier in its response, revealing exactly which server

SSL Certificate Management – The 2026 Complete Guide

Learn how SSL certificate management works from a monitoring perspective. Track expiry, detect changes, validate chains, and avoid downtime with proactive alerts.

Streaming Video Monitoring: How to Detect Playback Issues Before Viewers Leave

Learn how streaming video monitoring detects buffering, latency, and playback failures before viewers abandon your streams.

How to Monitor SSL Certificate Expiration

Learn how to monitor SSL certificate expiration using free tools, automated tracking, and alerts. Prevent downtime with the best SSL monitoring solutions in 2025.
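The core of automated expiry tracking can be sketched in a few lines of Python. The example below assumes you already have the certificate's `notAfter` string in the format that the standard library's `ssl.SSLSocket.getpeercert()` reports. The function names `days_until_expiry` and `needs_alert`, and the 30-day alert threshold, are hypothetical choices for illustration.

```python
import ssl
from datetime import datetime, timezone


def days_until_expiry(not_after: str, now: datetime) -> int:
    """Whole days between `now` and the certificate's notAfter time.

    `not_after` uses the "Jan  5 09:34:43 2018 GMT" format found in the
    dict returned by ssl.SSLSocket.getpeercert(). A negative result
    means the certificate has already expired.
    """
    # cert_time_to_seconds converts the notAfter string to a Unix timestamp.
    expires = datetime.fromtimestamp(
        ssl.cert_time_to_seconds(not_after), tz=timezone.utc
    )
    return (expires - now).days


def needs_alert(days_left: int, threshold_days: int = 30) -> bool:
    """Alert once the remaining lifetime drops to the (assumed) threshold."""
    return days_left <= threshold_days
```

Passing `now` in explicitly, rather than reading the clock inside the function, keeps the calculation easy to test. A scheduled job would supply `datetime.now(timezone.utc)` and send any alert to a channel such as Slack or Teams.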

Website Performance Monitoring, Site Speed and SEO

Site speed is no longer a secondary SEO concern — it’s a confirmed ranking factor. Here’s how continuous website monitoring keeps your Core Web Vitals healthy, your uptime reliable, and your search visibility strong.